This AI Paper Introduces WEB-SHEPHERD: A Process Reward Model for Web Agents with 40K Dataset and 10× Cost Efficiency

Web navigation focuses on teaching machines how to interact with websites to perform tasks such as searching for information, shopping, or booking services. Building a capable web navigation agent is a complex task because it requires understanding the structure of websites, interpreting user goals, and making a series of decisions across multiple steps. These tasks are further complicated by the need for agents to adapt in dynamic web environments, where content can change frequently and where multimodal information, such as text and images, must be understood together.

A key problem in web navigation is the absence of reliable, detailed reward models that can guide agents in real time. Existing methods primarily rely on multimodal large language models (MLLMs) such as GPT-4o and GPT-4o-mini as evaluators, which are expensive, slow, and often inaccurate, especially over long sequences of actions in multi-step tasks. These evaluators use prompting-based evaluation or binary success/failure feedback and do not provide step-level guidance, so agents often repeat actions or miss critical steps such as clicking a specific button or filling in a form field. This limitation reduces the practicality of deploying web agents in real-world scenarios, where efficiency, accuracy, and cost-effectiveness are crucial.

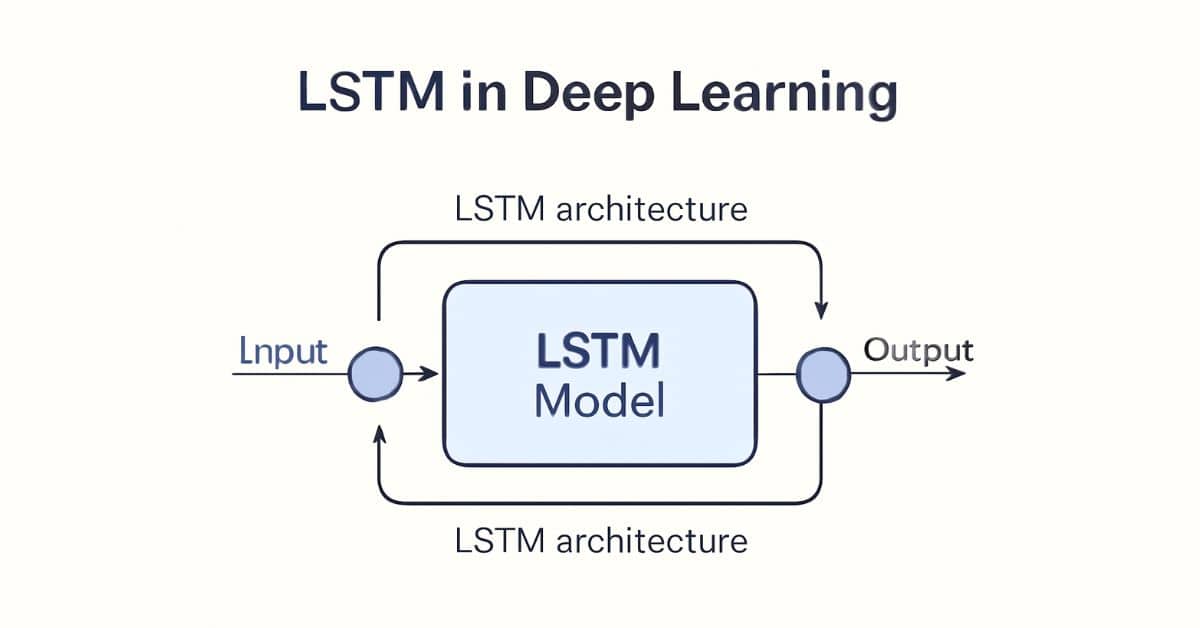

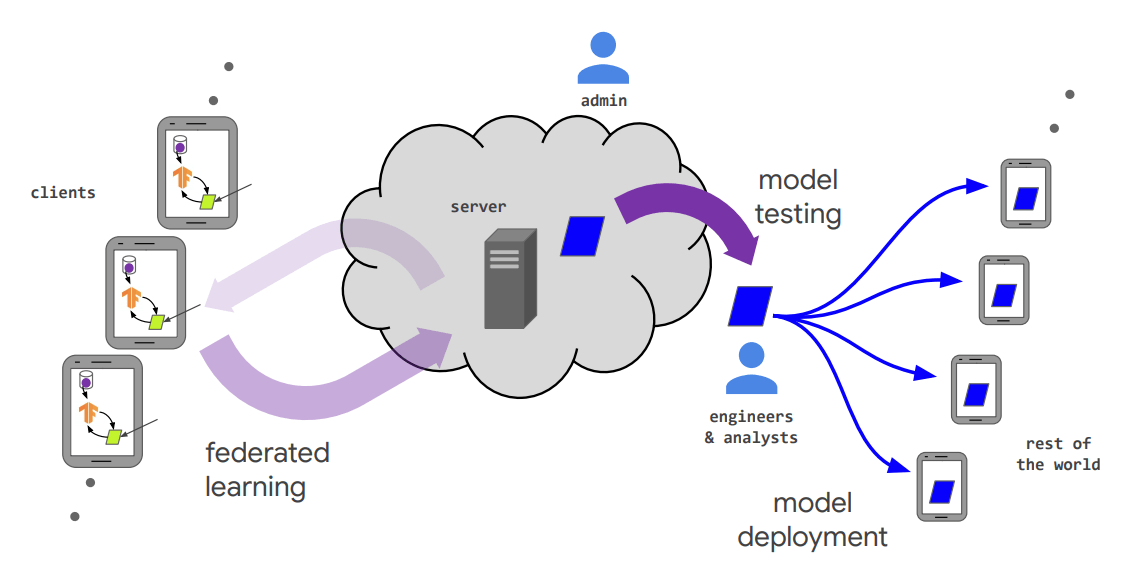

The research team from Yonsei University and Carnegie Mellon University introduced WEB-SHEPHERD, a process reward model (PRM) designed specifically for web navigation tasks. The authors present WEB-SHEPHERD as the first PRM to evaluate web navigation agents at the step level, using structured checklists to guide its assessments. The researchers also developed the WEBPRM COLLECTION, a dataset of 40,000 step-level preference pairs with annotated checklists, and WEBREWARDBENCH, a benchmark for evaluating PRMs. These resources were designed to enable WEB-SHEPHERD to provide detailed feedback by breaking down complex tasks into smaller, measurable subgoals.

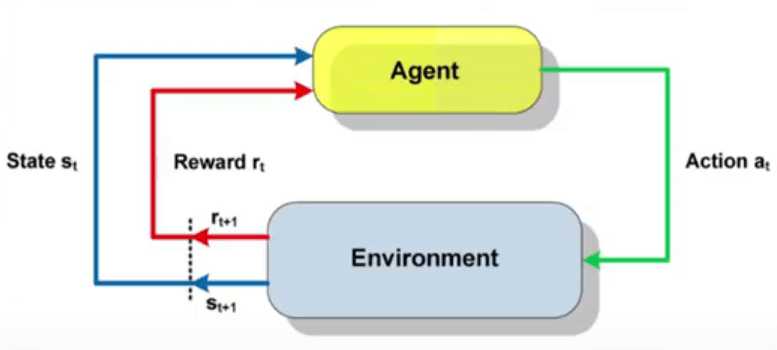

WEB-SHEPHERD first generates a checklist of subgoals from the user’s instruction, with items such as “Search for product” or “Click on product page,” and then evaluates the agent’s progress against each item. The model uses next-token prediction to generate feedback and assigns rewards based on checklist completion, which lets it judge the correctness of each step at a fine granularity. For every checklist item, it estimates a score by combining the probabilities of the “Yes,” “No,” and “In Progress” tokens; the reward for a step is the average of these scores across the checklist. This scoring gives agents targeted feedback on their progress, which improves their ability to navigate complex websites.
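The scoring rule described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the label weights (1.0 for “Yes”, 0.5 for “In Progress”, 0.0 for “No”), the function name `step_reward`, and the example probabilities are all hypothetical, since the article only says that the three token probabilities are combined and averaged.

```python
from typing import Dict, List

# Assumed weights per label. The article does not state how the three
# token probabilities are combined; this weighting is illustrative only.
LABEL_WEIGHTS = {"Yes": 1.0, "In Progress": 0.5, "No": 0.0}


def step_reward(checklist_probs: List[Dict[str, float]]) -> float:
    """Average a weighted label score over all checklist items for one step."""
    if not checklist_probs:
        raise ValueError("checklist must contain at least one item")
    scores = []
    for probs in checklist_probs:
        total = sum(probs.get(label, 0.0) for label in LABEL_WEIGHTS)
        if total <= 0:
            raise ValueError("label probabilities must sum to a positive value")
        # Renormalise over the three labels, then take the weighted sum.
        weighted = sum(w * probs.get(label, 0.0) for label, w in LABEL_WEIGHTS.items())
        scores.append(weighted / total)
    return sum(scores) / len(scores)


# Hypothetical token probabilities for a two-item checklist at one step.
example = [
    {"Yes": 0.8, "In Progress": 0.1, "No": 0.1},  # "Search for product"
    {"Yes": 0.1, "In Progress": 0.6, "No": 0.3},  # "Click on product page"
]
reward = step_reward(example)
print(f"step reward: {reward:.3f}")
```

Under these assumed weights, a step that completes the first subgoal and is partway through the second scores higher than one that has started neither, which is the kind of graded signal a binary success/failure evaluator cannot provide.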

The researchers reported that WEB-SHEPHERD significantly outperforms existing models. On the WEBREWARDBENCH benchmark, WEB-SHEPHERD achieved a Mean Reciprocal Rank (MRR) score of 87.6% and a trajectory accuracy of 55% in the text-only setting, compared to GPT-4o-mini’s 47.5% MRR and 0% trajectory accuracy without checklists. In WebArena-lite, with GPT-4o-mini as the policy model and WEB-SHEPHERD as the reward model, the agent reached a 34.55% success rate, which is 10.9 points higher than when GPT-4o-mini served as the evaluator, at roughly one tenth of the cost. In ablation studies, WEB-SHEPHERD’s performance dropped significantly when checklists or feedback were removed, indicating that both matter for accurate reward assignment. The researchers also found that multimodal input, surprisingly, did not always improve performance and sometimes introduced noise.
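For readers unfamiliar with the two metrics, the sketch below shows one way to compute them from per-step reward scores. It is an illustration only: the function names, the tie-handling rule, and the example scores are hypothetical, and the definition of trajectory accuracy used here (the correct action is ranked first at every step of a trajectory) is an assumption rather than something the article spells out.

```python
from typing import List, Sequence


def rank_of_correct(scores: Sequence[float], correct: int) -> int:
    """Rank of the correct candidate: 1 + number of candidates scored strictly higher."""
    return 1 + sum(1 for s in scores if s > scores[correct])


def mean_reciprocal_rank(score_lists: Sequence[Sequence[float]],
                         correct_indices: Sequence[int]) -> float:
    """Mean of 1 / rank of the correct action across all steps."""
    ranks = [rank_of_correct(s, c) for s, c in zip(score_lists, correct_indices)]
    return sum(1.0 / r for r in ranks) / len(ranks)


def trajectory_correct(score_lists: Sequence[Sequence[float]],
                       correct_indices: Sequence[int]) -> bool:
    """Assumed definition: the correct action is ranked first at every step."""
    return all(rank_of_correct(s, c) == 1
               for s, c in zip(score_lists, correct_indices))


# Hypothetical rewards for three steps, each with three candidate actions.
step_scores: List[List[float]] = [
    [0.9, 0.2, 0.4],  # correct action (index 0) ranked 1st
    [0.3, 0.7, 0.1],  # correct action (index 0) ranked 2nd
    [0.5, 0.6, 0.8],  # correct action (index 2) ranked 1st
]
correct = [0, 0, 2]

mrr = mean_reciprocal_rank(step_scores, correct)
all_steps_right = trajectory_correct(step_scores, correct)
print(f"MRR: {mrr:.3f}, trajectory correct: {all_steps_right}")
```

The example shows why the two numbers can diverge: a single mis-ranked step only lowers MRR slightly, but it makes the whole trajectory count as incorrect, so trajectory accuracy is the stricter measure.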

This research highlights the critical role of detailed process-level rewards in building reliable web agents. The team’s work addresses a core challenge of web navigation, namely evaluating complex, multi-step actions, and offers a solution that is both scalable and cost-effective. With WEB-SHEPHERD, agents can receive more accurate feedback during navigation, enabling them to make better decisions and complete tasks more effectively.

Check out the Paper and GitHub Page. All credit for this research goes to the researchers of this project.

The post This AI Paper Introduces WEB-SHEPHERD: A Process Reward Model for Web Agents with 40K Dataset and 10× Cost Efficiency appeared first on MarkTechPost.
